Enabling the Industry's Best ML Customer Experience

The Goal

In my last blog, I recounted the journey to help define an innovative methodology for scaling ML at the embedded edge. I have watched this market for over 20 years, and its underlying technology has not really innovated: it is still DSP work with optimized assembly programming and a focus on load/store coding. Meanwhile, the need for ML has clearly grown, not only in the cloud and IoT but also in the embedded edge part of the cloud story. The need is there, but embedded technology remains stodgy, without much innovation or a technology refresh in a long time.

Our first observation was that the need for ML exists in stodgy old markets that haven’t seen a technology refresh in a long time and that could really benefit from a company willing to take on the challenge of building a purpose-built ML solution for them. There are enough proof points of people doing something in ML, and we saw a larger market opportunity: a software-centric, purpose-built platform that could enable the scaling of machine learning at the embedded edge. That became the thesis behind what we decided to do. This unserved market represents $40 billion in annual consumption, and its traditional large-market-cap companies haven’t introduced ML in a meaningful way. That gives a brand new player the opportunity to come in and create an MLSoC environment that could scale ML for the embedded edge market.

Our goal at SiMa.ai is to scale embedded edge machine learning so that everybody can deploy ML on a massive scale, whether in autonomous vehicles, UAVs, robotics, or Industry 4.0. This trend has been talked about for the last 10 years, but if you look hard at the number of volume deployments, little has happened. The large core market opportunity and the current frustration in deploying ML at the embedded edge are why we believe the industry is moving toward the realization we came to as we were building the company: we need to take a very different approach and build something new for the embedded edge ML market.

Our Focus

The second observation was that ML is clearly well deployed in two end markets, the first being the cloud and the other mobile. However, it’s a very concentrated business: mostly advertisements and recommendation engines in the cloud, and a very targeted ML experience in NLP, facial recognition, or social media filters for mobile. Companies in both camps have an army of ML people inside. They know the use cases, they know what needs to be done, and they can afford to take on internal development. What we came to recognize about the embedded market is that there are no big sockets. No one large customer is going to move the needle, so we had no choice but to support a thousand-plus customers to really scale machine learning and bring the experience to them. We discovered that these customers have the desire for ML but lack the internal capacity and the fundamental knowledge base to build it up. They want to implement ML without the embedded edge learning curve, and it quickly became clear that we have to make the ML experience effortless for customers.

Only Company to Solve all Three

The original thesis for the company is that you push a button and you get a WOW! The experience needs to be abstracted to the point where thousands of developers can use it without all being ML geniuses and without tweaking layer by layer, hand coding to get the desired performance. You want them to stay at the highest level of abstraction and quickly, meaningfully deploy effortless ML. We latched onto this thesis because it correlates strongly with scaling: the ML experience needs to be effortless and must not require the hand-holding and services engagement that get in the way of scaling.

We spent the first year visiting 50-plus customers globally, trying to understand one thing: if all of you want ML, why are you not deploying it? What gets in the way of meaningfully deploying ML, and what is required to push ML into deployment at scale? It comes down to three key pillars, the first being Any. As a company we have to solve problems across a breadth of customers and use models, along with the disparity among ML networks, sensors, frame rates, and resolutions. It is a very disparate world where each market has completely different front-end designs, and if we take only a narrow slice of it we cannot economically build a company. We have to create a funnel capable of taking in a very wide range of application spaces; think of the funnel as the Ellis Island of everything computer vision. People could be in TensorFlow, they could be using Python, they could be using a camera sensor with 1080p resolution or a 4K sensor. It doesn’t matter, as long as we can homogenize them and bring them all in. Without a front end like this, you don’t have a scalable company.

The second pillar is 10x. Part of the reason customers cannot deploy and create derivative platforms is that every new model or pipeline means going back to scratch. And as a startup, we need to bring something exciting and compelling enough that anybody is willing to take the risk on us, based on a 10x performance metric. The one key technical merit we focus on for computer vision problems is the frames per second per watt metric. We need to be illogically better than anybody else so that we can stay a generation or two ahead, so we made this part of our software-centric approach. That approach created a heterogeneous compute platform so people can solve the entire computer vision pipeline in a single chip and deliver at 10x compared to any other solution.

The third pillar, Pushbutton, is driven by the need to scale ML at the embedded edge in a meaningful way. ML tool chains are very nascent and frequently broken, and no single company has really built a world-class ML software experience. We further recognized that for the embedded market it’s important to mask the complexity of the embedded code while also giving customers an iterative process to quickly come back, update, and optimize their platforms. To achieve effortless ML, customers need a pushbutton experience that gives them a response or a solution in minutes rather than months.

Any, 10x, and Pushbutton are the key value propositions. It became really clear to us that if we do a bang-up job on these three things, we will absolutely move the needle on effortless ML and on scaling ML at the embedded edge.

ML Software Done Right

Palette addresses the ML developer’s steep learning curve by avoiding the arcane practice of embedded programming. Palette software is a unified suite of tools that functions much like the cloud environments ML developers already know, with pushbutton software commands to create, build, and deploy on multiple devices. Palette can manage all dependencies and configuration issues within a container environment while securely communicating with the edge devices. This approach still gives embedded programmers the flexibility to perform low-level optimization of the code. The reaction from our ML developers was “This is exactly what I am looking for!”

Palette is the delivery mechanism for Any, 10x, and Pushbutton.

Any:

Our ML compiler front end leverages the open source Tensor Virtual Machine (TVM) framework, and thus supports the industry’s widest range of ML models and ML frameworks for computer vision.

10x:

Our compiler and patented static scheduling approach eliminate stalls, minimize caching, and improve utilization of our ultra-dense machine learning tiled architecture. This combination produces not only a high TOPS/watt rating but also FPS/watt efficiency that is 10-40x better than competing solutions, which often resort to hand-coded solutions.

Pushbutton:

We designed our innovative software front end to automatically partition and schedule your entire application across all of the MLSoC™ compute subsystems. We created a suite of specialized and generalized optimization and scheduling algorithms for our back-end compiler. These algorithms automatically convert your ML network into highly optimized assembly code that runs on the Machine Learning Accelerator (MLA). No manual intervention is needed for improved performance.
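To make the frames per second per watt metric behind the 10x pillar concrete, here is a minimal sketch of how such a comparison could be computed. The function names and every number below are hypothetical placeholders chosen for illustration; they are not SiMa.ai measurements and not part of Palette.

```python
def fps_per_watt(frames: int, seconds: float, avg_power_watts: float) -> float:
    """Return throughput efficiency: (frames / seconds) / average power in watts."""
    if seconds <= 0 or avg_power_watts <= 0:
        raise ValueError("seconds and avg_power_watts must be positive")
    return (frames / seconds) / avg_power_watts


def efficiency_ratio(candidate: float, baseline: float) -> float:
    """Return how many times more efficient the candidate is than the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return candidate / baseline


# Hypothetical example: both devices process 600 frames in 10 seconds,
# but the baseline draws 30 W on average and the candidate draws 3 W.
baseline = fps_per_watt(frames=600, seconds=10.0, avg_power_watts=30.0)
candidate = fps_per_watt(frames=600, seconds=10.0, avg_power_watts=3.0)
ratio = efficiency_ratio(candidate, baseline)
print(f"baseline={baseline} FPS/W, candidate={candidate} FPS/W, ratio={ratio}x")
```

A fair comparison of this kind would run the same network, input resolution, and pipeline stages on both devices and measure power over the same interval.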

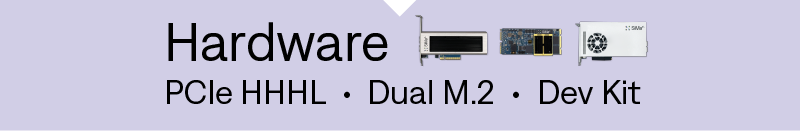

Our Boards

We recognize that in this industry customers have little capacity to take silicon chips and deliver fully deployed systems on their own. The majority of customers want board solutions. Often they don’t have time to run board spins and qualify them until they reach higher volumes, so for initial productivity they need something effortless. SiMa.ai has built two industry-standard board form factors, PCIe HHHL and Dual M.2. We’re deploying them now, and we will be doing more ruggedized platforms so we can continue to accelerate our customers as they move to deploy systems while staying at the board level. Some high-volume runners, as well as partners targeting specific markets, will use the MLSoC chip and design their own form factor boards. We’re enabling a quicker time to market for them with board design packages (Gerber files) wrapped with our software experience, so they can use our off-the-shelf boards in the short term while they qualify their own board designs, often customizing from what we have.

Machine Learning System on Chip (MLSoC)

The MLSoC is the underlying engine that enables all of this, and it is important to note that we are not building an ML accelerator. For the 2 billion dollars invested in edge ML SoC startups, the industry’s response for innovation has been an ML accelerator block, as a core or a chip. What people are not recognizing is that to migrate customers from a classic SoC to an ML environment, you need an MLSoC environment, so they can run legacy code from day one and gradually, in a phased, risk-mitigated way, move their capability into an ML component. One day they may be doing semantic segmentation using a classic computer vision approach, and the next day they could do it using an ML approach. Either way, we give our customers the opportunity to deploy and partition their problem as they see fit using classic computer vision, classic ARM processing, or heterogeneous ML compute. To us ML is not an end product, and therefore an ML accelerator is not going to be successful on its own. ML is a capability, one toolkit alongside the other tools we give our customers, so that with a pushbutton methodology they can iterate their design of pre-processing, post-processing, analytics, and ML acceleration all on a single platform while delivering the highest system-wide application performance at the lowest power.

Building on a solid foundation

What we have created at SiMa.ai is, no doubt in my mind, a billion-dollar business model. We continue to execute and pioneer with a foundation that is customer experience first, supported by superior technology that will fuel the next wave of innovation at the embedded edge.