Machine Learning. Effortless.

OUR TECHNOLOGY

So you need to add ML to your product. Simple, right? Wrong. ML solutions weren’t purpose-built for the embedded edge; everything you find is adapted for it. It’s like putting a square peg in a round hole. At SiMa.ai, we are simplifying the process of adding ML to your products by delivering the first software-centric, purpose-built MLSoC™ platform, with push-button performance for Effortless ML deployment and scaling at the embedded edge, so you can get your products to market faster.

Is Your ML Green?

- SiMa.ai is the MLSoC leader in the MLPerf power efficiency category (closed power) for the edge.

- This peer-reviewed result identifies SiMa.ai as a leader in efficient ML at the edge.

- Performing ML at the edge is significantly more power-efficient than performing ML in the datacenter.

- The power consumed transmitting and storing data in the datacenter can exceed the power needed to perform the ML calculations in the cloud.

- Avoiding that transmission and storage cost has a multiplicative effect on the power efficiency advantage of edge ML.
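
The multiplicative effect can be made concrete with a little arithmetic. The sketch below is illustrative only: the function name and every number in it are hypothetical placeholders, not measured SiMa.ai or MLPerf figures. It assumes the datacenter path pays for data transmission and storage on top of compute, so the edge advantage works out to the compute efficiency ratio multiplied by (1 + overhead / datacenter compute energy).

```python
def edge_advantage(edge_compute_j, dc_compute_j, dc_overhead_j):
    """Return how many times less energy the edge path uses per inference.

    edge_compute_j: energy to run one inference on the edge device (joules)
    dc_compute_j:   energy to run the same inference in the datacenter (joules)
    dc_overhead_j:  energy to transmit and store the input data for the
                    datacenter path (joules)
    """
    compute_ratio = dc_compute_j / edge_compute_j
    overhead_factor = 1 + dc_overhead_j / dc_compute_j
    return compute_ratio * overhead_factor


# Hypothetical placeholder values, chosen only to show the arithmetic.
ratio = edge_advantage(edge_compute_j=1.0, dc_compute_j=2.0, dc_overhead_j=2.0)
print(ratio)  # 4.0
```

With these placeholder values, a 2x compute advantage becomes a 4x overall advantage, because the transfer and storage overhead equals the datacenter compute energy.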

What we focus on

Whether you’re building smarter drones, more intelligent robots, or autonomous systems, SiMa.ai can help get you there.

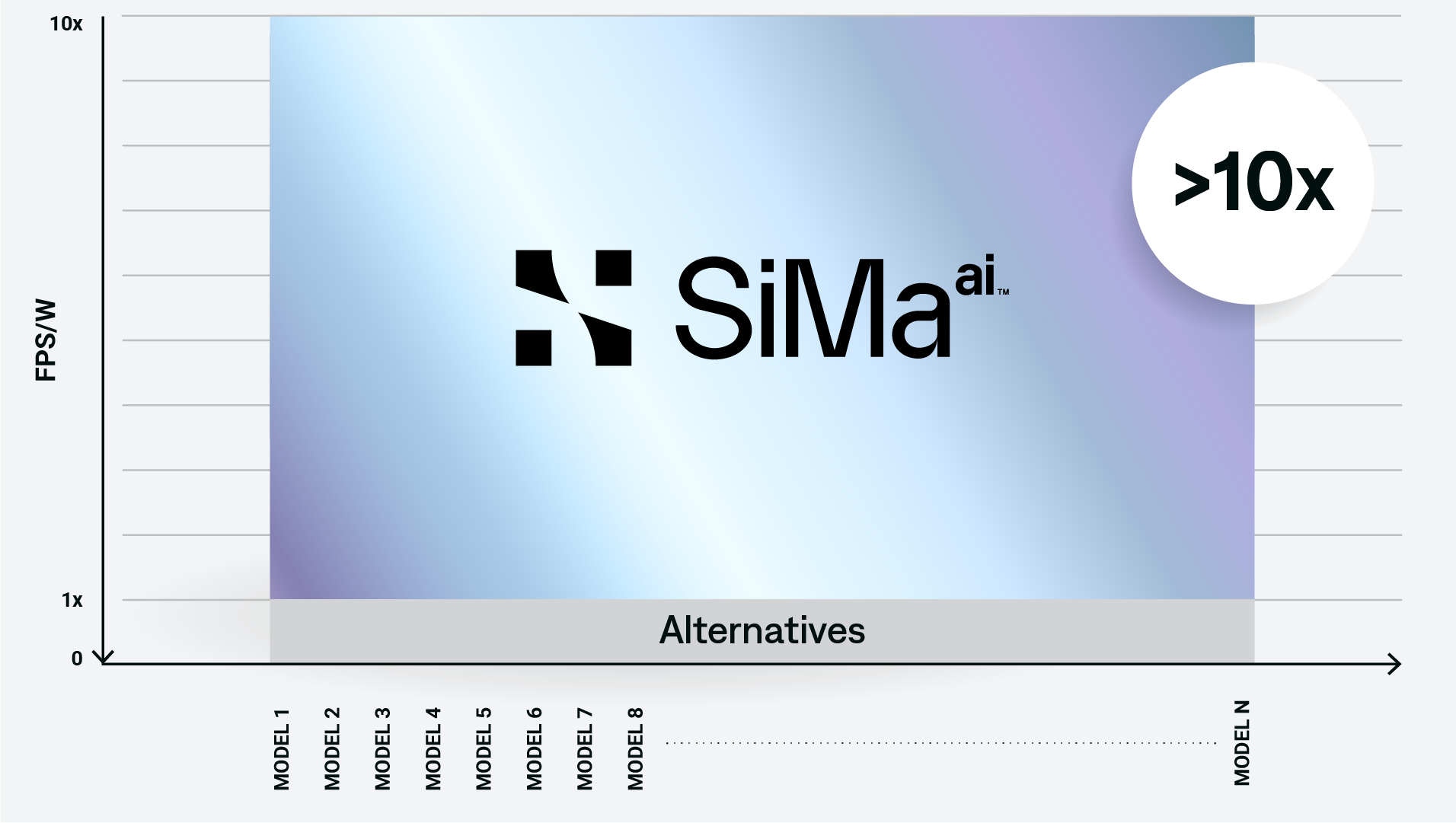

10x better than alternatives

Amazing power efficiency. Blazing-fast processing. Get immediate results with 10x better performance per watt than the competition. The SiMa.ai MLSoC™ enables real-time ML decision making while addressing performance, space, power, and cooling requirements. It provides the best performance per watt because it’s purpose-built for the embedded edge market, not adapted for it.

HERE’S HOW WE DO IT

To address customer problems and achieve best-in-class performance per watt, we knew we would need a software-centric machine learning solution that works in conjunction with a new, innovative hardware architecture.

Our radically new architecture leverages a combination of hardware and software to precisely schedule all computation and data movement ahead of time, including accesses to internal and external memory, to minimize wait times.
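
To give a feel for what scheduling ahead of time means, here is a minimal sketch of a static scheduler. It is a toy model, not SiMa.ai's compiler: the `Op` class, the `dma` and `compute` unit names, and the cycle counts are all hypothetical. It assumes every operation has a duration known in advance, so each one can be given a fixed start cycle before the program ever runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Op:
    name: str
    unit: str          # hardware resource the op occupies (hypothetical names)
    cycles: int        # fixed duration, known before execution
    deps: tuple = ()   # names of ops that must finish first


def static_schedule(ops):
    """Assign each op a fixed start cycle before execution.

    Ops must be listed in dependency order. Each op starts as soon as
    its inputs are ready and its hardware unit is free.
    """
    finish = {}      # op name -> cycle at which it completes
    unit_free = {}   # unit name -> first cycle at which the unit is idle
    schedule = {}    # op name -> start cycle
    for op in ops:
        ready = max((finish[d] for d in op.deps), default=0)
        start = max(ready, unit_free.get(op.unit, 0))
        schedule[op.name] = start
        finish[op.name] = start + op.cycles
        unit_free[op.unit] = finish[op.name]
    return schedule


# Toy two-tile workload: loading tile 1 overlaps with computing on tile 0.
ops = [
    Op("load_0", "dma", 4),
    Op("load_1", "dma", 4),
    Op("mac_0", "compute", 6, deps=("load_0",)),
    Op("mac_1", "compute", 6, deps=("load_1",)),
]
print(static_schedule(ops))
# {'load_0': 0, 'load_1': 4, 'mac_0': 4, 'mac_1': 10}
```

In this toy example the second load runs while the first tile is being processed, so its data is already in place by the time the compute unit frees up and the compute unit never idles waiting on memory.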

We designed only the essential hardware blocks required for deep learning operations and put all necessary intelligence in software, while including hardware interfaces to support a wide variety of sensors.

Any computer vision application

Whether you’re building smarter drones, intelligent robots, or autonomous systems, the SiMa.ai platform allows you to quickly and easily run any neural network model from any framework on any sensor with any resolution for any computer vision application. Run your applications, as is, right now on our MLSoC™.

HERE’S HOW WE DO IT

Our MLSoC Software Development Kit (SDK) is designed to run any of your computer vision applications seamlessly for rapid and easy deployment.

Our ML compiler front end leverages the open-source Tensor Virtual Machine (TVM) framework, and thus supports the industry’s widest range of ML models and ML frameworks for computer vision.

Bring your own model (BYOM) or choose one of our many pre-built and optimized models. SiMa.ai’s software tools will allow you to prototype, optimize, and deploy your ML model in three easy steps.

Push-button results

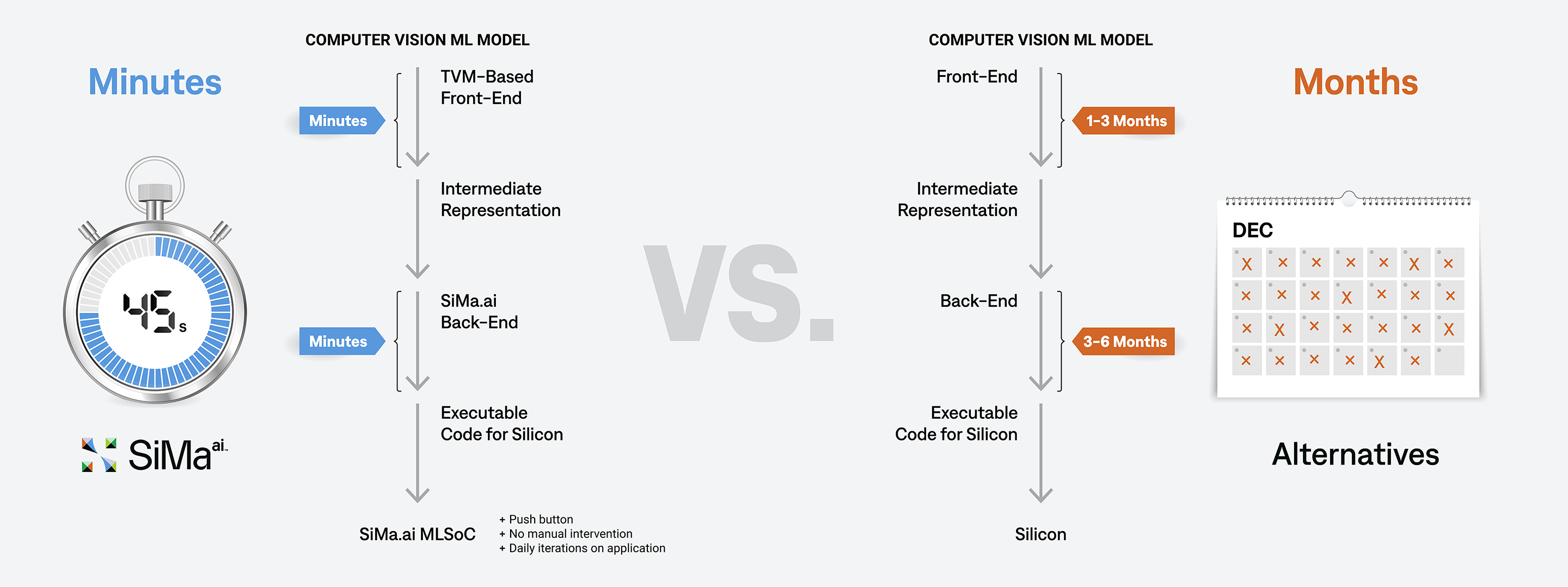

The ML industry is silicon-centric. Porting an ML application to a new platform is hard and time-consuming, and performance gains are uncertain. At SiMa.ai, we co-designed the software and hardware from day one. As a result, our industry-leading software suite lets you get results within minutes, with a simple push of a button and without the need to hand-optimize your application, saving you months of development time.

HERE’S HOW WE DO IT

We listened to our customers and it was clear that we had to make the software experience push-button. Our innovative software front-end automatically partitions and schedules your entire application across all of the MLSoC™ compute subsystems.
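
As a rough illustration of automatic partitioning, the sketch below assigns each stage of an application pipeline to a compute subsystem and groups neighbouring stages that share a target. It is a simplified assumption, not the SiMa.ai front-end: `MLA` is the accelerator named on this page, while the other subsystem names, the stage kinds, and the `partition` function are hypothetical.

```python
# Hypothetical mapping from stage kind to compute subsystem. "MLA" is the
# accelerator named in the text; the other subsystem names are placeholders.
PLACEMENT = {
    "neural_net": "MLA",
    "image_preprocess": "vision_processor",
    "control": "cpu",
}


def partition(pipeline):
    """Group consecutive stages that land on the same subsystem."""
    blocks = []
    for stage_name, kind in pipeline:
        target = PLACEMENT.get(kind, "cpu")  # unknown kinds fall back to the CPU
        if blocks and blocks[-1][0] == target:
            blocks[-1][1].append(stage_name)
        else:
            blocks.append((target, [stage_name]))
    return blocks


pipeline = [
    ("resize", "image_preprocess"),
    ("normalize", "image_preprocess"),
    ("detector", "neural_net"),
    ("tracker", "control"),
]
for target, stages in partition(pipeline):
    print(target, stages)
```

Each resulting block could then be scheduled on its subsystem without the developer deciding by hand where each stage runs.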

Customers can leverage our software APIs to generate highly optimized MLSoC code blocks that are automatically scheduled on these subsystems. We created a suite of specialized and generalized optimization and scheduling algorithms for our back-end compiler. These algorithms automatically convert your ML network into highly optimized assembly code that runs on the Machine Learning Accelerator (MLA). No manual intervention needed for improved performance.

Your entire application can be deployed to the SiMa.ai MLSoC with a push of a button. Build and deploy your application in minutes in a truly effortless experience.